Building trust and avoiding risk: How Fieldwire approaches AI in construction

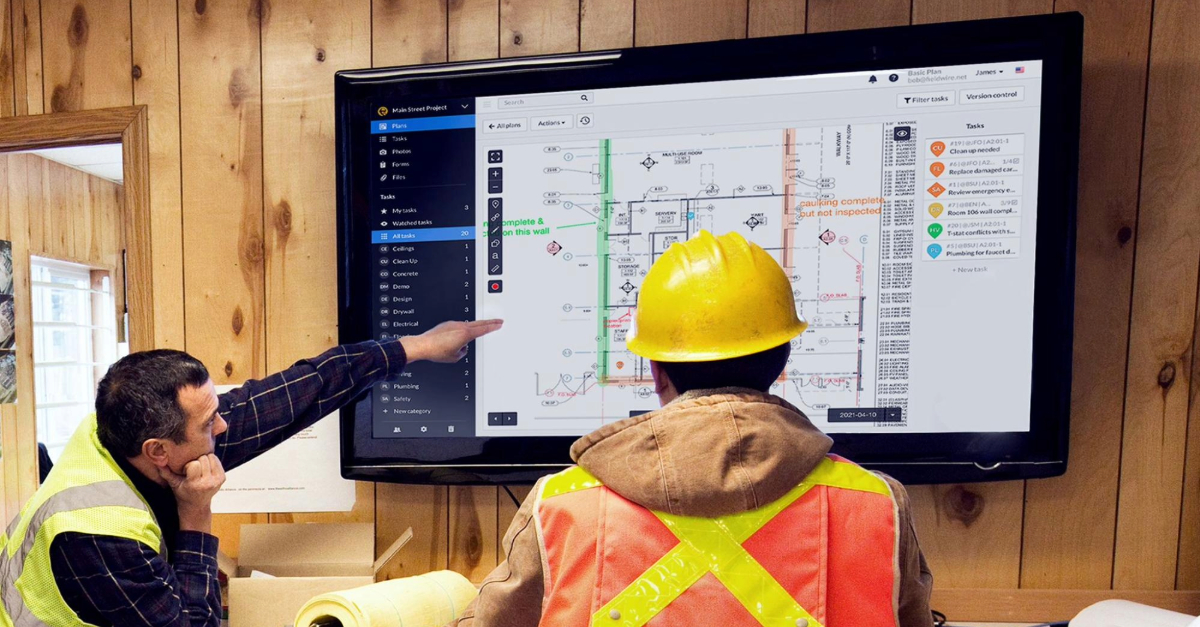

Construction teams are already using AI in small, practical ways: drafting RFIs from site notes, tagging jobsite photos, searching across documents in seconds, or summarizing daily reports. These tools can save time and reduce manual work, but with this transformation comes understandable concerns.

Construction projects involve highly sensitive information: project plans, specifications, budgets, schedules, and personal data. At the same time, AI systems can be unpredictable if not carefully designed, raising questions around accuracy, security, and accountability.

At Fieldwire, we believe AI should empower teams while maintaining the highest standards of privacy, reliability, and transparency. That’s why we’ve built our AI approach around clear principles designed specifically for the realities of construction workflows.

Below, we break down the most common AI risks in construction, and how Fieldwire addresses them.

Key takeaways

- AI is already used on jobsites for RFIs, photo tagging, and document search

- The main risks are data privacy, accuracy, security, and lack of transparency

- Construction workflows require stricter safeguards than most industries

- AI should assist decisions, not replace human judgment

- Fieldwire designs AI features with privacy, control, and reliability at the core

Data privacy: Protecting sensitive project information

The most significant concern around AI in construction is data leakage. Projects involve confidential documents, personal information, and business-critical insights that must never be exposed to the public, third parties, or even other customers. Common risks include:

- Leaking private customer files such as plans and specifications

- Exposing personally identifiable information (PII)

- Accidentally sharing data across projects or accounts

Fieldwire’s approach is to limit risk at the source. AI features only access the minimum amount of data required for a specific task. For example, only the text currently being drafted is processed, while a document search is restricted to data within the same project.

Data is never used to train models without explicit authorization, and information is anonymized when needed to prevent identification of individuals, locations, or clients. This includes masking sensitive elements such as identifiable areas or organization-specific details in documents.

Project data remains isolated at every level, ensuring that information from one customer or project is never exposed to another.

Read more insights on AI in construction on our blog: AI in construction: Understanding its real impact on the jobsite

Reliability and accuracy: Preventing hallucinations and reducing the risk of costly mistakes

AI systems can generate outputs that appear reliable but are incorrect, a phenomenon often referred to as hallucination. In construction, even minor inaccuracies can lead to rework, delays, or budget impacts.

To address this, Fieldwire focuses on strict evaluation and validation. AI outputs are tested against defined accuracy benchmarks before being deployed. Features rely on confidence thresholds, meaning results are only shown when they meet a validated level of reliability. For example, photo tags are applied only when the system reaches a high confidence score based on tested datasets.

Equally important, AI outputs are clearly presented as suggestions. Users are expected to review and validate results before taking action. The goal is to reduce manual effort without removing human oversight.

Security: Defending against malicious use

Beyond accidental errors, AI systems can be targeted by malicious users attempting to manipulate outputs, access hidden data, or trigger unintended actions. These risks are particularly relevant in collaborative environments like construction, where multiple stakeholders interact with shared data.

Fieldwire addresses this by limiting how AI systems can be used. Inputs are filtered and sanitized to prevent unsafe or irrelevant requests, and prompts are constrained to reduce the risk of manipulation. Each AI request runs in isolation, with no retained memory of previous interactions.

Access to data is also tightly controlled. AI systems retrieve only the context required for a task, typically at the project level, and never across customers. These safeguards are reinforced through external security reviews and controlled training datasets aligned with construction use cases.

Transparency and explainability: Making AI outputs verifiable

A major challenge with AI is the “black box” problem, where systems produce answers without explaining how or why they reached them. This can lead users to follow suggestions blindly, even when they don’t fit the project context. In construction, this lack of clarity can lead to misplaced trust or incorrect decisions.

Fieldwire prioritizes visibility. AI features retrieve relevant project data before generating outputs, and the supporting information is surfaced directly in the interface when possible. This can include the specific plans, tasks, or documents used to generate a response.

By making the source of information visible (for example, showing the exact tasks, plans or files referenced in a result), teams can quickly verify whether an output aligns with the project context.

Governance and accountability: Keeping humans in control

AI can generate content quickly, but responsibility for decisions must remain clearly defined. Without proper safeguards, there is a risk that users rely too heavily on automated outputs or that actions are taken without sufficient validation.

Fieldwire maintains a clear boundary: AI assists, but users decide. All AI-generated content is explicitly labeled, and nothing is finalized without user review, editing, and approval. Actions are always initiated by users, not by the system.

This ensures that accountability remains with the people managing the project.

Bias: Ensuring AI works for every role on the jobsite

AI systems reflect the data they are trained on. In construction, this can result in outputs that are more relevant to certain roles, such as general contractors, while being less useful for subcontractors or specialty trades.

To mitigate this, Fieldwire uses diverse datasets that reflect a wide range of project types and roles. Outputs are continuously evaluated to ensure they remain relevant across different users and workflows.

This allows for technical accuracy and practical usefulness for everyone involved in the project.

Building AI that construction teams can trust

AI has enormous potential to improve productivity, reduce manual work, and surface insights that were previously impossible to access quickly. But in an industry where precision, security, and accountability matter, that innovation must be built responsibly.

A responsible approach to AI requires more than performance. It requires clear boundaries around data, strong safeguards against errors and misuse, and transparency in how outputs are generated.

At Fieldwire, this approach is guided by a few core principles: limiting data exposure, validating outputs before they reach users, maintaining system security, and ensuring that people remain in control of decisions.

For teams working in environments where AI use is restricted, these features can also be disabled at the account level, allowing companies to adopt new capabilities at their own pace.

By addressing these concerns head-on, we’re building AI that construction professionals can trust. Tools designed not just to automate tasks, but to help teams build smarter, safer, and more efficiently every day.

Read practical insights into how construction teams are using AI today, from real workflows to measurable impact. Download our AI on the jobsite report.